Blog - AI in Politics

The Politics of Authenticity.

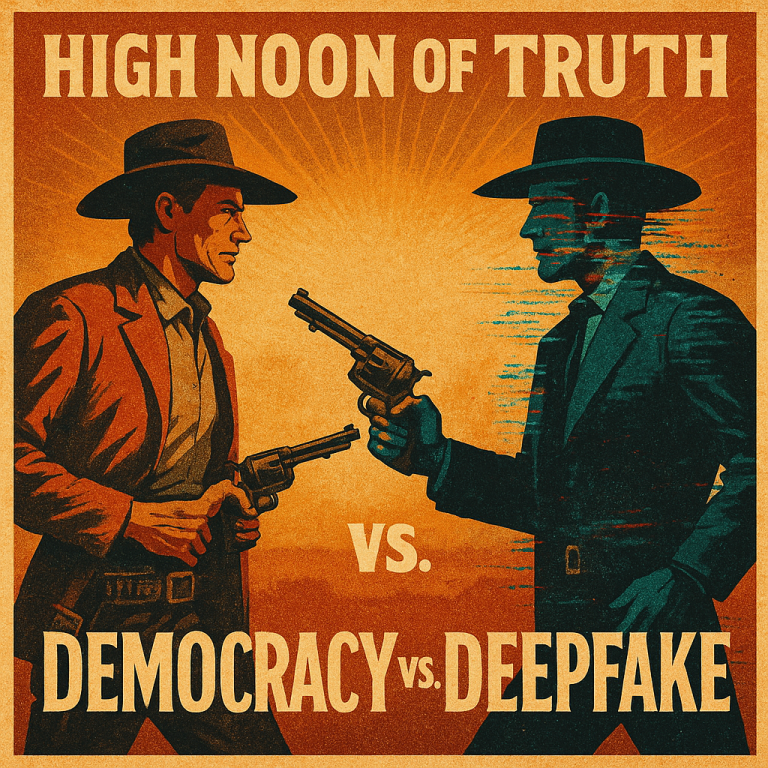

Democracy Will Die from Deepfakes - Unless It Implements Regulations for AI Authenticity.

By amedios editorial team in collaboration with our AI Partner

1. Democracy in the Age of Synthetic and Virtual Reality

The story of artificial intelligence has often been told as one of boundless progress: computers that write poetry, diagnose disease, design buildings, and power economies. But behind the dazzling headlines about generative AI lies a darker, more destabilizing force: its capacity to reshape, manipulate, and ultimately weaponize reality itself.

At the heart of this transformation is a new class of AI systems known as generative models. These algorithms are capable of producing human-like text, realistic images, convincing audio, and even entire videos from simple prompts. These systems, once the stuff of science fiction, are now embedded into everyday tools and consumer platforms. They enable extraordinary creativity and efficiency. But they also enable something else: deception at industrial scale.

This is where the deepfake enters the picture. The term blends “deep learning” and “fake”: a deepfake is a piece of synthetic media (e.g. an image, an audio clip, or a video) created by an AI system that convincingly imitates real people, events, or evidence. It can be a video of a politician saying something they never said, a fabricated confession, a counterfeit press conference, or a voice message that never existed. And as these models become more powerful and more accessible, the line between real and fake isn’t just blurring. It’s evaporating.

Deepfakes are not a curiosity or a fringe phenomenon. They are the natural, predictable byproduct of the generative AI revolution, in which machines learn to mimic human expression without understanding truth. And while they may seem at first like digital pranks or viral novelties, their implications are profound: they undermine the very epistemic foundations on which democratic societies depend.

Democracy, after all, is more than a voting mechanism. It is a shared agreement about what is real, a collective trust in the evidence presented before the public. Without that shared reality, everything else begins to unravel. Policy debates become meaningless. Accountability becomes impossible. Institutions lose legitimacy. And civic trust - the oxygen of democratic life - begins to suffocate.

We are, in short, entering a new political era where reality itself becomes a contested domain, and where the ability to generate convincing falsehoods is no longer limited to nation-states or intelligence agencies, but available to anyone with a laptop and a Wi-Fi connection.

2. How Deepfakes Weaponize Democracy

The danger of deepfakes is not simply that they deceive. It’s that they can be deployed strategically - precisely when and where trust matters most - to manipulate opinion, distort elections, discredit opponents, or destabilize entire governments.

In the hands of malicious actors, synthetic media becomes a political weapon that exploits the core vulnerabilities of democratic systems.

2.1 Elections Under Siege

No democratic process is more exposed to deepfake manipulation than elections. Campaigns run on information like speeches, interviews, scandals, and political debates. Deepfakes target that information environment directly. They can fabricate false statements, manufacture scandals, or create entirely fictional events - all timed to spread at the most damaging moment.

Consider the 2024 presidential election in Taiwan, one of the first major contests in which AI-generated content played a significant role. In the weeks leading up to the vote, fake videos and AI-generated audio clips flooded messaging platforms and social media. Some appeared to show candidates admitting to corruption; others fabricated inflammatory positions on China and national security. Even when debunked, these deepfakes achieved their goal: planting doubt and distorting public perception in the most critical moments of the campaign.

A similar incident occurred in the 2023 Slovak parliamentary election. Just 48 hours before polls opened, an audio deepfake surfaced online in which progressive candidate Michal Šimečka appeared to discuss vote-rigging tactics. It spread too quickly for fact-checkers or regulators to respond, and experts believe it may have influenced the final result. The lesson was chilling: the final days before an election, once a period of intense democratic scrutiny, become a window of maximum vulnerability in a world where disinformation can be fabricated on demand. From now on, those days risk turning into a free-for-all of synthetic AI content, with no time left to set the record straight.

2.2 The “Liar’s Dividend”

The power of deepfakes extends beyond the falsehoods they spread. Even when they’re exposed, they create a new political dynamic: the liar’s dividend. Once the public becomes aware that realistic fabrications are possible, everything becomes questionable, including genuine evidence. Politicians caught on tape can claim the footage is fake. Scandals can be dismissed as AI-generated hoaxes. Truth itself becomes negotiable.

This phenomenon is already visible in the United States, where campaign operatives on both sides have floated the idea of pre-emptively labeling damaging leaks or recordings as “AI fabrications” - true or not. The result is a profound erosion of accountability. If nothing can be trusted, then nothing can be verified - and political consequences become optional.

2.3 Deepfakes as Instruments of Statecraft

The threat doesn’t stop at domestic politics. Deepfakes are rapidly being incorporated into the arsenal of information warfare. They are used by state actors to shape narratives, sow division, and justify aggression.

Imagine a fabricated video of a NATO general confessing to war crimes, released days before a UN vote on sanctions. Or a fake news broadcast depicting a missile strike that never happened, designed to tank markets or justify military action. Such scenarios are no longer far-fetched: intelligence agencies have documented Russian and Chinese networks using synthetic media to influence elections, amplify propaganda, and manipulate geopolitical narratives.

In this emerging “synthetic geopolitics,” the truth is not merely contested. It is manufactured and deployed like a weapon. And because these operations are cheap, scalable, and difficult to attribute, they are likely to become a standard tool of statecraft in the coming decade.

2.4 The Democratic Fragility Problem

All of this leads to a deeper and more troubling consequence: the erosion of democratic legitimacy itself. Democracies depend on the ability of citizens to make informed decisions based on shared facts. If that foundation collapses, so too does the system built upon it.

Deepfakes exploit the very strengths of democratic societies, such as open communication, free media, and pluralism, and turn them into vulnerabilities. They poison public discourse, obscure accountability, and destabilize trust in institutions. They create a world in which no evidence is conclusive, no source is credible, and no political process is immune to manipulation.

This is not a future problem. It is happening now. It will accelerate as AI models become more powerful, more accessible, and more deeply integrated into our media environment. The same technologies that promise to automate industries, accelerate science, and unlock creativity are also enabling a new era of synthetic disinformation that could rewrite the rules of democracy itself.

3. Why Governance Matters – And Why It’s Failing

If deepfakes are the most disruptive byproduct of the AI revolution, then governance is its most underdeveloped response. While the ability to fabricate convincing falsehoods has advanced at lightning speed, the mechanisms designed to regulate, mitigate, and contain that power are moving at a glacial pace. The result is a dangerous imbalance: societies that are increasingly shaped by synthetic media and governments that remain fundamentally unprepared to deal with it.

The reasons for this governance gap are both structural and political. At the most basic level, the pace of technological change far exceeds the speed at which laws can be drafted, negotiated, and enacted. A deepfake can go from concept to viral distribution in hours; legislation takes months or years. Regulators find themselves legislating for a yesterday that no longer exists by the time their rules are passed.

But the problem runs deeper. Most democratic systems are built on deliberation and consensus. These qualities are strengths in normal times. They prevent abuse, balance interests, and protect civil liberties. But in a world where malicious actors can weaponize synthetic AI-based media to distort elections or incite violence in real time, this slowness becomes a liability. Democracies are, paradoxically, more vulnerable to deepfake manipulation precisely because they are more open and pluralistic.

Authoritarian states, by contrast, face no such constraints. They can impose sweeping content regulations overnight, mandate state-run verification protocols, and tightly control platforms. The result is a growing strategic asymmetry: open societies face existential risks from deepfakes, while closed societies exploit them as tools of statecraft.

There is also a deeper conceptual failure. Most existing regulatory frameworks are designed for misinformation - falsehoods spread by humans - rather than synthetic reality, which blurs the line between truth and fabrication entirely. Content moderation policies built for fake news stories or doctored images are ill-suited to a world where entire events, identities, and narratives can be fabricated on demand. This outdated mindset means regulators are often fighting the last war, while the next one is already being lost.

4. The Emerging Global Patchwork

Around the world, governments are slowly (too slowly) beginning to recognize the dangers of deepfakes and synthetic media. But the regulatory landscape is fragmented, inconsistent, and in many cases inadequate. The result is a patchwork of approaches that reflect differing political priorities, legal traditions, and strategic interests.

4.1 The European Union: Ambitious but Incomplete

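The EU has taken the most comprehensive approach to regulating AI and synthetic media to date. The AI Act, which entered into force in August 2024 and whose transparency obligations apply from August 2026, does not classify deepfakes as “high-risk” systems. Instead, it requires providers of generative AI to mark synthetic outputs in a machine-readable way, and requires those who deploy deepfakes to disclose clearly that the content is artificially generated or manipulated. The Digital Services Act (DSA) further mandates that very large online platforms mitigate systemic risks, including disinformation, and provide transparency about their algorithms.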

Alongside this legislation, industry-led initiatives such as the C2PA standard (from the Coalition for Content Provenance and Authenticity) and the Content Authenticity Initiative aim to embed provenance metadata into media files. Together, these efforts point in a clear direction: make synthetic media traceable, label it transparently, and hold platforms accountable.

But the EU approach faces practical challenges. Enforcement will be slow and uneven across member states. Many of the technical solutions like watermarking and metadata can be stripped or circumvented. And the laws themselves were drafted before the explosion of multimodal AI models, meaning they may already be outdated when they take effect. Europe is leading, but it is leading with tools that may not be sharp enough.

4.2 The United States: Fragmented and Reactive

The United States, despite being home to most of the major AI companies, remains far behind in regulating deepfakes. There is no federal law addressing synthetic media directly. Instead, a patchwork of state laws - from California’s ban on election-related deepfakes to Texas’s anti-impersonation statutes - provides partial and inconsistent coverage.

Federal agencies are beginning to act. The Federal Election Commission (FEC) is considering rules on AI-generated campaign ads, while the Federal Trade Commission (FTC) has warned companies about deceptive synthetic endorsements. But these are piecemeal efforts. They are more reactive than strategic.

The deeper problem is political. Regulation of speech touches constitutional nerves, and lawmakers remain deeply divided over how to balance free expression with the need to protect democratic processes. As a result, the U.S. risks becoming the world’s largest exporter of deepfake technology, and one of its least prepared victims.

4.3 China: Authoritarian Efficiency

China has moved faster and more aggressively than any other major power. Its rules on “deep synthesis” services, in force since January 2023, require synthetic media to be labeled and traceable, users to verify their real identities, and providers to file their algorithms with regulators. Platforms must proactively monitor and remove unlabeled deepfakes, and companies face severe penalties for violations.

The result is a regulatory environment that, while draconian, appears highly effective at enforcement. China’s approach sharply limits the spread of unlabeled synthetic content and gives the state near-total visibility into its information ecosystem. But it comes at a cost: such systems are easily weaponized for censorship and state control. In Beijing’s hands, authenticity becomes not a safeguard for truth, but an instrument of power.

4.4 Asia-Pacific and Beyond: Global Coordination, Limited Impact

Many governments in the Asia-Pacific region are experimenting with domestic regulation. The initiatives range from Japan’s voluntary AI safety guidelines to Singapore’s “Model AI Governance Framework”. But the broader international response remains fragmented and mostly aspirational. Multilateral organizations have recognized the deepfake threat, but their current actions lack binding force and technical depth.

The G7’s “Hiroshima AI Process” (2023) was one of the first high-level attempts to address synthetic media risks at a geopolitical scale. It set out a shared commitment among the world’s largest democracies to promote transparency, watermarking, and accountability in generative AI systems. However, it stops short of setting enforceable standards or shared verification requirements, leaving implementation to national discretion.

The OECD’s “AI Principles” (first adopted in 2019, updated in 2024) go one step further by explicitly acknowledging the dangers of synthetic content and disinformation. They call on governments and industry to build safeguards, promote transparency in AI outputs, and respect democratic values. Yet, like the G7 declaration, they remain non-binding guidelines. They are influential as a normative benchmark, but toothless when it comes to enforcement.

More recently, the OECD’s “Recommendation on Information Integrity” (2024) has focused directly on the erosion of public trust caused by manipulated and synthetic content. It proposes policy measures such as strengthening the resilience of information ecosystems, promoting provenance standards, and supporting independent verification hubs. These are promising ideas, but they remain consultative and voluntary in nature. No government is yet legally obligated to follow them.

The United Nations, through initiatives like UNESCO’s “Synthetic Content and Its Implications for AI Policy” (2023), has likewise sought to raise global awareness. The report emphasizes the cross-border nature of synthetic media, urging coordinated governance, better labeling standards, and ethical frameworks. Yet here, too, the gap between principle and practice remains wide.

Taken together, these initiatives reflect a growing global consensus that synthetic media represents a systemic risk to democratic governance, social cohesion, and the integrity of public information. But they also reveal the current limits of international cooperation: lots of shared concern, little enforceable action. Without binding standards, verification protocols, and legal accountability, the architecture of trust will remain patchy, and malicious actors will continue to exploit the gaps.

Who’s Doing It Right?

Each model reflects a different theory of governance and a different set of trade-offs:

- The EU is the most ambitious regulator, seeking to balance innovation and protection. But its complex frameworks risk being too slow and too easy to evade.

- China is the most effective in enforcement, but its methods are incompatible with democratic values.

- The U.S. remains the most fragmented, relying on litigation and state-level laws instead of comprehensive federal policy.

- Asia-Pacific democracies are the most collaborative, but also the most reliant on voluntary action.

None of these approaches, on their own, is sufficient. A sustainable solution will likely need to combine Europe’s regulatory ambition, Asia’s cooperative spirit, America’s innovation ecosystem, and (critically) strong democratic safeguards against the authoritarian temptation.

The Strategic Reality: Governance Will Shape the AI Era

The battle over deepfakes is not just about content. It’s about power. Whoever sets the rules for synthetic media will shape the future of information, influence, and democracy itself. And right now, those rules are being written in a fragmented, inconsistent, and reactive way.

The result is a world where malicious actors exploit regulatory gaps, where platforms face minimal accountability, and where trust continues to erode. If we are to build a future in which truth still matters, governance must evolve from playing catch-up to setting the pace.

This means thinking beyond compliance. It means designing legal frameworks for a world where reality itself is editable. It means treating content authenticity not as a niche technical issue, but as a fundamental pillar of democratic security, as essential to 21st-century governance as free elections or the rule of law.

The age of deepfakes is also the age of governance. Without robust, agile, and globally coordinated policies, synthetic media will not just distort individual events. It will reshape the fabric of democratic life. The question is not whether we can regulate deepfakes. It’s whether we can do so fast enough to preserve the trust on which free societies depend.

5. The Trust Stack - Five Layers to Build a Global Authenticity Infrastructure

The global response to deepfakes and synthetic media has so far been characterized by piecemeal fixes and reactive measures. Watermarks, labels, and detection tools (while valuable) are ultimately patches on a far larger systemic wound. They attempt to treat symptoms without addressing the underlying condition: a digital ecosystem in which truth has no technical anchor.

If the first wave of solutions was about identifying fakes, the second wave must be about making authenticity verifiable by design. That requires shifting our thinking from “tools” to “infrastructure.” Just as societies once built physical systems to guarantee the safety of food, medicine, and currency, we now need digital systems that guarantee the integrity of information itself.

This is not an optional upgrade. It is the foundation upon which the legitimacy of democratic governance, markets, and institutions will rest in the coming decades. Without trusted information flows, elections become vulnerable, justice becomes unreliable, and economic transactions lose their legal basis. Authenticity is not a luxury feature of the digital age; it is its constitutional core.

Enter our recommendation: The Trust Stack

A New Architecture for the Age of Synthetic Media

To solve the deepfake crisis, we must stop thinking of “authenticity” as a single tool or feature and start thinking of it as an ecosystem. In the same way that a modern city depends on many layers of infrastructure (like roads, water, electricity, communications), the digital world will need a multi-layered trust infrastructure that works together to guarantee the reliability of what we see and hear online.

This system, which we call The Trust Stack, is built like a series of five layers. Each layer performs a different function, but together they form a powerful shield against manipulation. If one layer fails, the others can still hold. If all five work together, truth itself becomes part of the internet’s operating system.

1. The Origin Layer – Trust Begins at Creation

- Every photo, video, audio file, or AI-generated clip must carry a digital fingerprint from the moment it is created. That means embedding cryptographically signed metadata, such as the time, location, and the device or software used, directly into the file.

- Like a passport stamp on a document, this fingerprint tells us where and when something was born, making later verification possible.

- Without this starting point, everything downstream becomes guesswork. Authenticity must begin at the source.
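To make the Origin Layer concrete, the following Python sketch binds capture metadata to the exact bytes of a file at creation time, so that any later change to either can be detected. It is an illustration under stated assumptions, not the C2PA format: the field names, the hypothetical `DEVICE_KEY`, and the helper functions are invented for this example, and HMAC-SHA256 stands in for the public-key signature a real camera or AI tool would use (an HMAC can only be checked by parties who share the secret).

```python
import hashlib
import hmac
import json

# Hypothetical device secret. A real capture device would sign with a private key
# (public-key signature); HMAC-SHA256 is used here only to stay within the stdlib.
DEVICE_KEY = b"hypothetical-device-secret"


def _canonical(data: dict) -> bytes:
    """Serialize deterministically so identical claims always produce identical bytes."""
    return json.dumps(data, sort_keys=True, separators=(",", ":")).encode("utf-8")


def _sign(claims: dict) -> str:
    return hmac.new(DEVICE_KEY, _canonical(claims), hashlib.sha256).hexdigest()


def create_manifest(content: bytes, created_at: str, location: str,
                    device: str, software: str) -> dict:
    """Bind capture metadata to the exact bytes of a media file at creation time."""
    claims = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "created_at": created_at,
        "location": location,
        "device": device,
        "software": software,
    }
    return {"claims": claims, "signature": _sign(claims)}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """True only if the metadata is unaltered and still matches the content bytes."""
    if not hmac.compare_digest(_sign(manifest["claims"]), manifest["signature"]):
        return False  # metadata was changed after signing
    return manifest["claims"]["content_sha256"] == hashlib.sha256(content).hexdigest()


photo = b"raw image bytes"
manifest = create_manifest(photo, "2025-03-01T09:30:00Z", "52.52N,13.40E",
                           "hypothetical-camera-01", "firmware-1.0")
print(verify_manifest(photo, manifest))         # True
print(verify_manifest(photo + b"!", manifest))  # False: the file was modified
```

Changing a single byte of the file, or editing any metadata field after signing, makes `verify_manifest` return `False`. That detectability is the property the Origin Layer depends on.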

2. The Integrity Layer – Tracking Every Change

- Once content enters the digital world, it’s often copied, cropped, remixed, or edited. To maintain trust, we need a transparent and tamper-evident record of every significant change: a digital chain of custody.

- Compare it to a police investigation: every piece of evidence must be documented as it changes hands. The same is true for digital media.

- This audit trail prevents malicious actors from secretly altering content and claiming it’s original. Every step becomes visible and verifiable.
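A common way to build such a tamper-evident chain of custody is a hash chain, in which each record includes the hash of the record before it. The Python sketch below assumes a simple in-memory list of edit records with hypothetical fields (`action`, `editor`, `content_sha256`); it illustrates the idea and is not a specification.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _entry_hash(entry: dict) -> str:
    """Hash a record's fields (including the previous hash) deterministically."""
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_edit(log: list, action: str, editor: str, content: bytes) -> None:
    """Record one change, linking it to the hash of the previous record."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {
        "prev": prev,
        "action": action,
        "editor": editor,
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    log.append({**entry, "hash": _entry_hash(entry)})


def verify_chain(log: list) -> bool:
    """Recompute every link; editing, reordering, or deleting an earlier record breaks it."""
    prev = GENESIS
    for record in log:
        entry = {k: v for k, v in record.items() if k != "hash"}
        if entry["prev"] != prev or _entry_hash(entry) != record["hash"]:
            return False
        prev = record["hash"]
    return True


log = []
append_edit(log, "capture", "photographer", b"original")
append_edit(log, "crop", "newsroom", b"cropped")
print(verify_chain(log))  # True

forged = [dict(r) for r in log]
forged[0]["editor"] = "someone-else"
print(verify_chain(forged))  # False
```

Altering, deleting, or reordering any earlier record breaks every link after it. Silently dropping the newest records cannot be detected from the log alone, which is one reason to anchor the latest hash with an independent party, as the Verification Layer proposes.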

3. The Verification Layer – Independent Proof of Authenticity

- A fingerprint and an audit trail are useful, but only if someone trustworthy confirms they’re real. That’s the job of verification registries: independent databases that timestamp content, confirm its provenance, and make it publicly checkable.

- Think of it like a notary or land registry for the internet. It acts as a neutral party that proves a document is what it claims to be.

- Trust should never depend solely on the creator. Independent verification turns authenticity from a private claim into a public fact.

4. The Distribution Layer – Platforms as Gatekeepers of Trust

- Even the best verification system is useless if platforms ignore it. That’s why social networks, search engines, browsers, and news aggregators must integrate authenticity data directly into how they rank, recommend, and moderate content.

- Just as airports screen luggage before allowing it on a plane, platforms should screen content before amplifying it.

- Verified content should rise to the top; suspicious content should face scrutiny. Distribution systems must become trust-aware, not trust-blind.
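As one hypothetical illustration of a trust-aware distribution system, the Python sketch below scales a platform's existing engagement score by a multiplier tied to verification status and flags failed provenance checks for review. The status names, the multiplier values, and the `Post` type are assumptions made for this example, not a recommendation of specific numbers.

```python
from dataclasses import dataclass

# Hypothetical weights: how strongly each verification status scales a post's reach.
TRUST_MULTIPLIER = {
    "verified": 1.0,    # valid origin signature, intact edit chain, registry receipt
    "unverified": 0.6,  # no provenance data at all
    "failed": 0.1,      # provenance present but broken: a strong signal of tampering
}


@dataclass
class Post:
    post_id: str
    engagement: float  # whatever relevance score the platform already computes
    status: str        # one of the keys of TRUST_MULTIPLIER


def trust_aware_rank(posts: list) -> list:
    """Order posts by engagement scaled by verification status, highest first."""
    return sorted(posts, key=lambda p: p.engagement * TRUST_MULTIPLIER[p.status],
                  reverse=True)


def needs_review(post: Post) -> bool:
    """Route content whose provenance check failed to review before amplification."""
    return post.status == "failed"


posts = [
    Post("viral-fake", 100.0, "failed"),
    Post("plain", 50.0, "unverified"),
    Post("newsroom", 40.0, "verified"),
]
print([p.post_id for p in trust_aware_rank(posts)])  # ['newsroom', 'plain', 'viral-fake']
```

Multipliers rather than a hard filter are a deliberate choice in this sketch: content without provenance data, which will include much legitimate material during any transition, stays visible but can no longer outrank verified reporting on engagement alone.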

5. The User Layer – Trust Must Be Human-Readable

- Finally, none of this matters if ordinary users can’t understand it. Authenticity signals such as verification badges, trust scores, or “reality filters” that show only verified content make trust visible and actionable.

- A simple comparison: a nutrition label on food doesn’t prevent contamination, but it helps consumers make informed choices.

- Transparency only works if people can see and use it. Trust should not hide in the metadata; it should live in the user interface.
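For the User Layer, the technical checks from the lower layers have to collapse into a few states that people can read at a glance. The Python sketch below shows one hypothetical mapping to three badge texts plus an opt-in “reality filter”; the status names, badge wording, and function names are illustrative assumptions, and real labels would need testing with actual users.

```python
# Hypothetical badge wording; real labels would need testing with actual users.
BADGES = {
    "verified": "Verified: signed at capture, edit history intact, registered",
    "unverified": "No provenance information available",
    "failed": "Warning: provenance check failed, content may have been altered",
}


def status_from_checks(has_provenance: bool, signature_ok: bool,
                       chain_ok: bool, registered: bool) -> str:
    """Collapse the lower layers' technical checks into one human-readable status."""
    if not has_provenance:
        return "unverified"
    if signature_ok and chain_ok and registered:
        return "verified"
    return "failed"  # provenance exists, but at least one check did not pass


def badge_for(status: str) -> str:
    return BADGES[status]


def reality_filter(feed: list) -> list:
    """Opt-in user setting: keep only items whose status is 'verified'."""
    return [item for item in feed if item["status"] == "verified"]


feed = [
    {"id": "press-photo", "status": status_from_checks(True, True, True, True)},
    {"id": "edited-clip", "status": status_from_checks(True, True, False, True)},
    {"id": "anonymous-post", "status": status_from_checks(False, False, False, False)},
]
for item in feed:
    print(item["id"], "->", badge_for(item["status"]))
print([item["id"] for item in reality_filter(feed)])  # ['press-photo']
```

This sketch treats any failed check as a warning; a gentler design might distinguish “not yet registered” from “signature invalid” so that users are not alarmed by content that is merely new.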

The power of the Trust Stack lies in the combination.

No single layer can solve the deepfake problem on its own. But together, they form a resilient ecosystem: origin data establishes identity, integrity trails ensure transparency, verification registries provide authority, platforms enforce standards, and users make informed choices. This is how we design truth into the system: not as an afterthought, but as a foundational principle of the digital world.

6. Policy, Industry, Standards: The Governance Triangle

Building a global authenticity infrastructure is not the job of any single actor. It demands a coordinated effort across what we call the Governance Triangle: a three-way collaboration between governments, industry, and civil society.

Governments: Set the Standards and Enforce Them

States must legislate minimum authenticity requirements, mandate disclosure of AI-generated content, and ensure legal consequences for synthetic impersonation or fraudulent media. They should fund public verification infrastructure, establish interoperability standards, and integrate authenticity into election law, evidence rules, and public procurement.

Industry: Build Open, Interoperable Systems

Tech companies must embed authenticity protocols directly into their products, from cameras to content platforms. They should collaborate on open APIs, adopt shared metadata standards, and commit to transparency around synthetic media generation. Corporate secrecy cannot come at the expense of societal trust.

Consortia and Civil Society: Guard the Commons

Independent bodies such as standards organizations, academic institutions, and NGOs play a crucial role in auditing systems, monitoring compliance, and advocating for human rights safeguards. They ensure that authenticity infrastructure serves democratic ends, not surveillance or censorship.

This triangle must operate in lockstep. If one side fails, the entire system fails, for example when governments legislate without technical input or when industry builds without democratic oversight. Trust infrastructure is a public good, and it must be treated as such.

Trust as a Strategic Asset

In the 20th century, nations competed on military power, industrial capacity, and financial influence. In the 21st, they will compete on trust. States that can guarantee the authenticity of their information environments will enjoy more resilient democracies, more stable economies, and more credible diplomacy.

For businesses, too, authenticity will become a differentiator. Verified media will be more valuable than unverified content. Consumers will gravitate toward brands that guarantee transparency. Investors will reward companies with strong authenticity governance. Trust will shift from a defensive posture to a proactive source of strategic advantage.

This is not an abstract prediction. It is already happening. Media organizations are branding stories as “verified,” platforms are experimenting with authenticity filters, and financial regulators are exploring provenance requirements for ESG reporting. In a world where anything can be faked, the ability to prove authenticity becomes a source of power.

7. Policy Roadmap 2025–2030: A Roadmap for the AI Deepfake Age

The previous chapters outlined what a trustworthy digital future could look like. We sketched the architecture of trust - a multi-layered system of cryptographic signatures, immutable audit trails, distributed verification registries, and user-facing authenticity signals. This framework shows what must exist if democratic societies are to preserve a shared sense of reality in the AI era.

But architecture alone will not save us. Blueprints don’t build themselves. Without political will, legal frameworks, and coordinated action, even the most elegant solutions remain ideas on paper.

The uncomfortable truth is this: if we do nothing, the deepfake era will not merely distort information. It will corrode the foundations of democracy itself. Trust in elections, journalism, public institutions, even in one another, will fragment. What follows is not a dramatic collapse but a slow unraveling: a society where facts are negotiable, accountability is impossible, and manipulation is the norm rather than the exception.

That outcome is not inevitable, but avoiding it requires intention. We must move from theory to practice, from principles to policy. And that means treating authenticity infrastructure not as a niche tech project, but as a public good: something to be built, regulated, maintained, and defended just like transportation networks, power grids, or national security systems.

This chapter is therefore not a wish list, nor a prediction. It is a strategic policy proposal: a roadmap that translates the layered trust architecture into a sequence of concrete, achievable steps over the next five years. It is designed as a guide for lawmakers, regulators, industry leaders, and civil society actors who recognize that this challenge cannot be solved by technology alone.

Each stage builds upon the last: from public awareness and voluntary standards, to mandatory transparency requirements, interoperable global registries, and finally, enforceable governance frameworks. Together, these milestones form a path from today’s reactive patchwork to tomorrow’s robust authenticity ecosystem, one capable of protecting truth itself in the age of synthetic media.

Here’s how governments, regulators, and industry leaders can turn this vision into reality within five years, year by year, with specific steps and clear objectives.

Year 1: Awareness and Foundations

The first phase is about building a shared understanding of the threat and establishing the minimum technical and policy groundwork. Governments, platforms, and industry must begin speaking a common language about authenticity and lay the foundations for what will follow.

- Launch nationwide public awareness campaigns on deepfakes and synthetic media.

- Fund research into detection, provenance technologies, and trust infrastructure.

- Create initial voluntary guidelines on AI-generated content disclosure for major platforms and media outlets.

Year 2: Standards and Voluntary Adoption

Once awareness is established, the focus must shift toward defining the rules of the road. This is the year in which voluntary standards and industry self-regulation start to shape expectations and where technology providers integrate authenticity by design.

- Develop and publish open technical standards for content provenance and metadata (building on initiatives like C2PA).

- Incentivize voluntary adoption of provenance tags and watermarking by media companies, platforms, and AI developers.

- Support cross-sector pilot projects to test interoperability of authenticity tools across borders and platforms.

Year 3: Legal Anchoring and Transparency Requirements

With standards maturing, policymakers must move from encouragement to obligation. Transparency becomes the baseline: platforms, AI providers, and content producers must disclose when, how, and by whom synthetic media is generated.

- Pass national legislation requiring clear labeling of AI-generated content in political advertising, public communication, and news media.

- Mandate transparency reporting for major AI platforms, detailing detection methods and provenance adoption rates.

- Establish legal liability frameworks for malicious synthetic media creation and distribution.

Year 4: Infrastructure and Global Interoperability

As regulation tightens, attention turns to building the infrastructure that makes authenticity scalable and global. Cross-border cooperation and interoperability become essential, because deepfakes ignore national boundaries.

- Launch government-backed or multilateral authenticity registries that verify and timestamp digital content.

- Require platforms and major media outlets to integrate authenticity verification APIs into their services.

- Negotiate international agreements on interoperability and data-sharing to track cross-border synthetic media activity.

Year 5: Enforcement, Accountability, and Trust by Default

In the final phase, authenticity is no longer optional. It has become part of the digital fabric. Enforcement mechanisms, liability structures, and governance models ensure that the trust infrastructure remains resilient, adaptable, and universal.

- Implement penalties for non-compliance with authenticity standards and provenance requirements.

- Establish independent oversight bodies to audit and certify authenticity systems and provenance technologies.

- Expand verification mandates beyond media to include financial services, critical infrastructure, and government communication.

This roadmap is not about slowing technology down. On the contrary, it’s about anchoring it in trust. With each step, we move from reactive firefighting toward proactive governance. By 2030, authenticity can be as fundamental to the digital world as identity documents are to the physical one.

An Outlook: Trust Will Define the AI Century

The coming decade will not be defined by who builds the most powerful AI models, but by who builds the most trustworthy information systems. Deepfakes are not just a technological challenge. They are a governance challenge, a societal challenge, and ultimately a civilizational one.

We are witnessing the rise of a new geopolitical fault line: those who can anchor truth in the digital world, and those who cannot. The countries and companies that succeed in building authenticity infrastructure will shape not only the future of information, but the future of democracy itself.

Authenticity is no longer an optional feature of the digital ecosystem. It is its operating system. Governments, platforms, and enterprises must treat it as a national security priority, an economic necessity, and a democratic imperative.