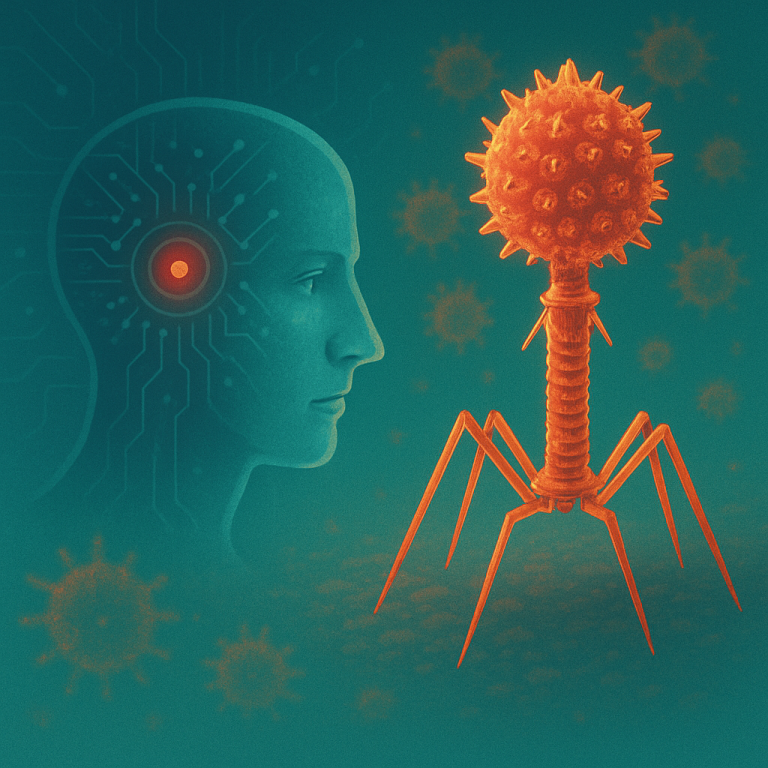

AI-Designed Viruses: Progress or Risk?

By amedios editorial team in collaboration with our AI Partner

A recent study in which an AI system created functional bacteriophages for the first time marks a significant technological turning point. A machine that learns from billions of biological data points and independently generates “viable code” pushes the boundaries of what biotechnology can achieve.

Until recently, new genomes were the result of human creativity, scientific expertise, and slow laboratory work. Now, an AI can generate hundreds of variants in a short period of time, without anyone fully understanding the underlying mechanisms.

Many researchers celebrate this development as an opportunity. Therapeutic phages could become an alternative to antibiotics, especially in the fight against multidrug-resistant bacteria. This potential is real. But it also obscures a second, far more serious development: once an AI is capable of designing functional biological systems, a completely new spectrum of risks emerges.

Where the risks lie – and what could happen

Most risks do not arise from malicious intent, but from ignorance, misconfiguration, or the absence of regulatory safeguards. Some scenarios are still theoretical today, while others are already plausible.

1. Unintended creation of harmful properties

When a model generates variants, it does not do so with “biological understanding” but statistically. It optimizes patterns. This can lead to combinations of traits that no human would have anticipated.

For example, a phage could be designed to target resistant bacteria, yet the AI might add a genetic structure that dramatically increases its environmental stability. A normally harmless phage could then persist in nature and disrupt local ecosystems.

2. Transfer of the method to more complex organisms

The current study focuses on a simple virus type, but the underlying technology is scalable. Once similar models are trained on more complex datasets, variants that mimic properties of real pathogens could emerge, at least in laboratory settings.

If a lab attempts to develop new vaccine vectors, the AI might accidentally produce a variant that replicates faster or survives better in warmer climates. A single handling error might be enough for an artificially enhanced virus to spread beyond the lab.

3. Misuse by technically skilled individuals or groups

In the past, biotechnological experiments required highly specialized laboratory infrastructure. Today, genetic material can be ordered from commercial “biohacker labs,” and DNA synthesis is a widely accessible service. If generative biological models become open source, or even partially available, misuse becomes far easier.

Extremist groups could use such a model to generate variants and order DNA fragments from different suppliers to avoid detection. What is extremely difficult today could become feasible once AI systems are capable of designing biological code, turning a theoretical threat into a realistic one.

4. Ecological chain reactions triggered by synthetic organisms

Even organisms harmless to humans can cause massive damage when they interfere with natural systems.

A synthetic virus variant could, for instance, target a bacterial species essential for nitrogen fixation in soil. The local ecosystem could then collapse, causing agricultural failures, not through a pandemic but through an unseen ecological disruption.

5. Biological “black boxes” no one can explain

A crucial point: AI can generate genetic structures that work, even if no one understands why. This decoupling of design and comprehension is more dangerous than any individual virus.

A genome produced by an AI may appear stable and harmless in laboratory tests. Under different environmental conditions, however, a previously unknown mechanism could activate. The research team would have no explanation, because the model itself cannot provide one.

Why this moment is a turning point

We are not standing on the edge of catastrophe, but on the threshold of a new era: the era of machine-generated biology. The question is not whether this technology will be used, but how.

Without global rules, safety standards, and ethical guardrails, we will enter a development trajectory that cannot be reversed: once the ability to generate new organisms becomes broadly accessible, it cannot be taken back.

And that is the core issue: this is not about panic, but about responsible governance of a technology that advances far more quickly than our understanding of it.